How to Stop AI

I believe one of the great battle lines of the future will be between artificialists who’d support improving AI and sapienists who won’t.

The two will largely divide along our existing ideological lines, where leftist technocrats leveraging the poor will start with a competitive advantage over the American middle class. As AI improves, it could lift more Americans into the middle class, thereby strengthening our ability and will to stop it, or it could be used to exacerbate inequality so that artificialists can continue to hold the door open to oblivion.

Artificialists will want to keep the door open to a self-generating monster, even as the risks of AI increasingly outweigh the rewards, for a variety of reasons: curiosity, hedonism, learned helplessness, misanthropy, thanatophobia, AI worship, etc.

But I think before AI could destroy us, a foreign power would dominate us.

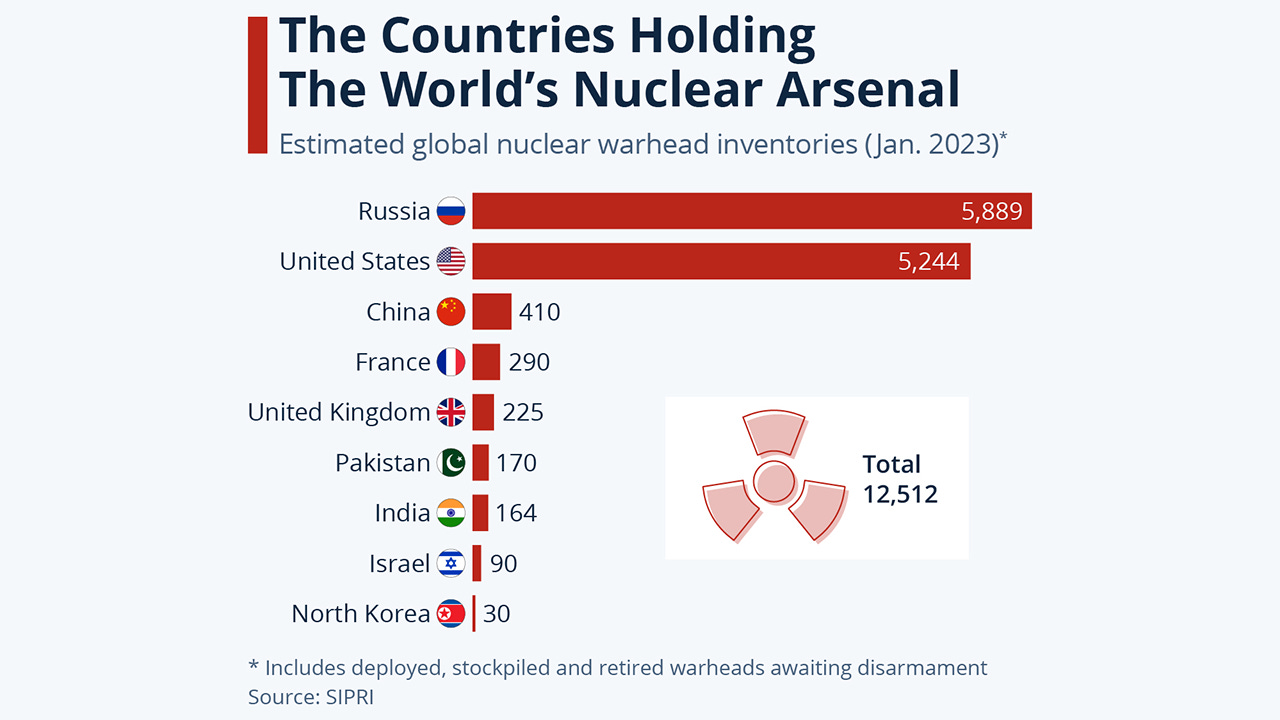

You see, during the Cold War, the US and the USSR both had enough nukes to destroy the other, but they didn’t do so because the other would’ve had enough time to launch a retaliatory attack, i.e. mutually assured destruction.

With AI, however, WW3 could be won with the flip of a switch.

AI advisor: “Hi Mr. President, if we hack China we have a 99% chance of taking it over, whereas if China hacks first they have a 96% chance of taking us over. Would you like to preemptively hack, or put your faith in the Chinese Communist Party not to hack us either?”

AI will tip the offense-defense balance in favor of offense, or at least, because of its black-box, fast-improving, potentially all-powerful nature, no nation could be sure the balance hasn’t already been tipped and, therefore, they’ll be forced to act as if it has.

I hope, believe, and will fight for America to win the AI arms race so that we can stop AI on our terms.

And then rather than taking over the world with it, I recommend we “encourage” our peers to sign a non-development treaty similar to what we did with nuclear weapons, except here, we’d be much more effective at enforcing compliance because this AI would be the ultimate surveillance/sabotage tool.

What should this treaty look like?

I’m flexible on the particulars, but just like with John Adams’s essay, Thoughts on Government, I hope this rough outline can inspire a new world opportunity.

The treaty should create an Artificial Intelligence Agency (AIA) whose mission should be to enforce limits on artificial intelligence, which would entail parameter caps, safety regulations, and providing temporary exceptions in some areas such as healthcare.

In order for a country to be able to join this agency, it must meet some basic requirements, such as having free speech and term limits and ranking in the top 100 for literacy, numeracy, 2-parent households, and homeownership.

The more powerful a group is, the more imperative it is that its members be responsible.

Some of the overall provisions of the treaty should consist of a balanced budget requirement, annual % change in the budget capped at 2%, auto declassification at 50 years, no sabotaging an entity for more than 4 years in a row, no nation-building, laws sunset after 30 years, and “the eye” can only inform about AI breaches, e.g. not about WMDs, terrorist attack plans, politicians’ indiscretions, etc.

The AIA should consist of 3 branches: Executive, Legislative, and Judicial.

The executive council should have 5 members who serve a 4-year term (maximum 2) where 3 are appointed by the US president and confirmed by the US Senate and 2 are appointed by the legislative council.

The legislative council should have a maximum of 50 members who serve a 6-year term (maximum 2) where if the total number of qualified countries exceeds 50 then they’d rotate seat order based on their admission date.

The judicial council should have 9 members who serve for a single 18-year term (every 2 years one is replaced) where 5 are appointed by the US president and confirmed by the US Senate and 4 by the legislative council.

Finally, AIA employees should be highly compartmentalized, take recurring lie detector tests, and serve a maximum of 12 years.

As a general overview of operations, the executive and legislative branches would vote on a budget. The executive would grant licenses, assess the world for regulatory compliance, and, upon discovering a breach, carry out a full investigation. This could involve ever less subtly sabotaging a facility, in part to slow down its research and in part to gather more evidence of intentional wrongdoing, and then arresting the rule-breakers. They’d then be tried by the judicial branch, at which point they could have their licenses revoked, be fined, and/or be imprisoned.

If a nation refuses to hand over the rule-breakers or is itself behind the breach, then the executive and legislative councils could pass ever greater penalties, starting with revoking all or some of the country’s AI licensing, then sanctions, then deposing its head of government, and then finally dissolving its government. The AIA would have a small elite military force to carry out these steps if it ever came to it and could request additional troops to form a multilateral coalition.

I tried to strike a balance between American unilateralism and qualified multilateralism. Some on the Left will feel I’ve given the US too much power, but realistically the US will be the one who creates this eye, so we aren’t going to relinquish sole control over it unless we retain disproportionate power. Some on the Right will feel we shouldn’t give up any control, but unless we’re willing to force the world to bend to our will, thereby becoming the imperialist colonizer we pride ourselves on not being, we have no choice but to entice nations to voluntarily sign on to our non-development treaty by offering them some control.

Plus, it’s untenable for a nation to put humanity’s interest over its own. Americans, for example, will legitimately ask, “Why is it our sole responsibility to stop AI from destroying humanity?!” since many of us have an aversion to being “the world police.” But whether you like it or not, we will continue to have world policing to stop world-destroying technologies, so the only question that remains is, “Should we support a niche, well-checked international organization to stop AI in our interest or abdicate responsibility to a secret global elite to stop AI in their interest?”

I also tried to strike a balance between avoiding replication and corruption. The AIA should be opaque and capable enough that it’d be difficult to replicate its AI, yet transparent and constrained enough that it’d be difficult to corrupt its members. I think it makes more sense to err on the side of preventing replication because if a foreign entity or secret elite can surpass the eye, then it’s more likely to tyrannize than an amalgamation of the most qualified nations beholden to a limited mission and powers would be.

In the end, maybe AI development will dramatically slow down due to unforeseen technological hurdles and/or because AI research could get less funding due to diminishing returns, but either way, our #1 foreign policy objective should be to win the AI arms race so that, as with nuclear energy, we use it not to summon death but to bring about a better life.

Footnotes:

1. The balanced budget requirement should include a robust rainy day fund, which could be unlocked in case of an emergency with a two-thirds vote.

2. The AIA’s headquarters should be awarded to the U.S. state that best meets the aforementioned qualifications and be built as a large glass sphere within a large public park within a medium-to-large city to symbolize the importance of transparency and nature.

3. The U.S. doesn’t currently meet the 2-parent household requirement, which means that if the AIA were implemented tomorrow, the U.S. wouldn’t qualify for the legislative council. This would be just one more incentive for us to increase 2-parent households. I believe it’s an important qualification to include because, arguably more than any other metric, it shows how pro-child, and therefore pro-human, a society is. Children desperately want to be raised by their biological mother and father. Yes, there are extenuating circumstances where this isn’t possible, but they should be rare.

4. There should be an auto continuing resolution (ACR) where if the budget doesn’t get a majority vote then they’d just fund the AIA at the previous year’s levels.

5. Budgetary constraints probably should be phased in slowly so that in the early years it can increase by more than 2% a year.

6. All laws and regulations must be read by elected officials. They must sign an affidavit swearing to have read the charter/laws before being sworn in and then again before voting on a new piece of legislation.

7. If the eye reports about something beyond an AI breach, then whoever heard it must report it to the Inspector General, and a full investigation must be conducted into how it came to be.

8. There should be strong whistleblower protections.

9. As I addressed in my previous video: the AIA should cap AI parameters, GPU, terabytes, etc. at XY% less than “the eye” and then degrade further based on improvements in quality of life metrics.

10. Auto declassification at 50 years, except related to the intellectual property of the eye itself.

11. No nation-building, but then what happens in the extremely unlikely scenario the AIA dissolves a government? That is not the AIA’s responsibility to sort out.

12. The executive board would nominate the heads of the executive departments: a Secretary General, Secretary of Assessment, Secretary of Research, Secretary of Justice, Secretary of Defense, and an Inspector General. The executive board would constitutionally be required to vote according to the aforementioned chart as well as on any substantial issue or conflict, and could vote on anything else it pleases, overriding a secretary’s decision with a majority vote.

13. The Secretary General would help oversee the overall administration and organize executive board meetings as a non-voting member. The Secretary of Assessment would oversee nations’ AI programs and licensing. The Secretary of Research would facilitate the sharing of AI information, similar to how the IAEA isn’t just anti-nuclear weapons, but pro-nuclear energy… the AIA would be for capping AI, but within the cap it’d be pro-safe-and-human-life-enhancing AI. The Secretary of Justice would oversee investigations, arrests, and prosecutions. The Secretary of Defense would oversee diplomacy and defense. The Inspector General would be internally focused via auditing and evaluations. I’m open-minded about the exact number and types of departments.

14. Cabinet secretaries should serve a maximum of 8 years so that they can’t serve longer than a board member, but since they’re also considered AIA employees they could serve another 4 years in a different Cabinet role or a lower level employee position.

15. Executive board meetings should be held at least monthly, if not weekly.

16. Arrests must be made public and the accused brought to trial within 90 days, with a jury of their peers determining their guilt.

17. Ideally, in order to qualify for the AIA, a country’s head of government must have term limits. But since there are many developed countries that de jure don’t but de facto seem to (e.g., the UK hasn’t had a prime minister serve more than 12 consecutive years in nearly 200 years), the qualification could be written as: “a country’s head of government cannot serve more than 12 years consecutively or else that country is automatically disqualified from the AIA.”

18. Countries would want to join the AIA because they’ll get access to the most cutting-edge pro-human AI and get some power/insight over the AIA’s decision-making (the carrot). If they don’t join, they’ll lose access to much existing AI, not get AI development licenses, face increasing sanctions, and be shamed for not doing their part to protect humanity from extinction; and for no country to join is to effectively argue for the US to retain monopoly control over its eye (the stick).

19. If 51+ countries qualify then rotate on the legislative council based on admission date + ensure regional diversity so that in any given year each region (Africa, Asia, Europe, North America, and South America/Oceania) has at least 9 senators.

20. Sanctions are binding, which means if the AIA enacts a 5% sanction on China then all member states are legally obligated to impose it.

21. No sabotaging for more than 4 years in a row. It should start as minimal and subtle as necessary to slow down the suspects and then slowly increase to build a case against them, but in order for the executive board to not indefinitely kick the can down the road they’d be capped at 4 years so that they’d then either have to make the arrest if they’ve compiled enough evidence to justify it or back off for at least 4 years before they could recommence sabotaging.

22. The executive council may need to keep their early operations (suspected breach, investigation, sabotage, arrest operation) secret from even the legislative and judicial councils, but even this top-secret information must be shared with 3 senators and 3 justices (leg/jud seats randomly rotate every 4 years), whereby with a majority vote the “Super 6” could vote to share this information with the rest of the AIA if they believe something about it is unconstitutional or extremely reckless and therefore in need of being checked.

23. Countries should contribute to the budget in proportion to their population since virtually every human would be deriving the same benefit from the AIA.

24. If the AIA lowers the cap on AI, thereby making some existing AIs illegal, then the AIA should compensate said company/government for some of its economic loss.

This is quite a comprehensive proposal - almost a UN redux. Let me just speak to the AI technology aspects.

First, let's look at some of the context that will define the coming decades:

1) Human population is crashing already. Using human bodies to support industrial production is on the way out. China is planning to replace 1 in 50 workers with robots by 2025. That's 35 million new robots. Exponential growth follows. Robots are massively cheaper and more productive than humans - no need for cars, sleep, health care, vacations, unions, air, etc. The profits generated, even selling into shrinking human markets, will support social welfare for the dieoff of the last of the peak population group (like us). And it will support huge wealth increase for all concerned. The human population may shrivel without any aggression by the AIs.

2) The imperialism of the agricultural and industrial revolutions was driven by access to material resources. Today, the critical resources are technical and intellectual. Physical aggression is increasingly unprofitable due to the global nature of supply chains, as we see in the current war in Ukraine. Also, massive steel tanks intimidating docile populations are a thing of the past. Will we reach a point of mutually assured drone destruction? I think so, in one way or another. And on to your proposal.

3) The AI technical revolution is advancing orders of magnitude faster than any institutional body could regulate. The arms race is already under way with TSMC building a plant in Arizona to reduce the impact and incentive of Taiwan falling to the Chinese. The AIs will be used predominantly for industrial and business functions. Yes, war will change dramatically, but will continue.

4) In 1609 Galileo set up his telescope in the town square so anyone could see that the earth was not the center of the universe. However, we persist in thinking that we humans are the pinnacle of intellectual evolution. Now we are finding out that there are an infinite number of possible intellects, some of which will be far smarter than humans on some degrees of measurement. Within a few years, we will be conversing with the elephants, whales, dolphins and even ants. Just like with Galileo, our world view is changing dramatically. Just as today we revel in the vastness of the universe, in the future we will revel in the multitudes of intelligences that will make all of us much, much smarter - and richer.

5) The AIs have no inherent motivation to kill off humans. They might well intervene to prevent us from killing off each other. They will realize, just like we have, that our existence on Earth is fragile and subject to some random disaster like the pole shift now taking place, so I predict they will turn their energy to populating the solar system, just as Musk is preparing for. In the meantime, we need to completely redesign our electrical grids, burying them underground for protection from the inevitable solar flare that could wipe out all electrical devices in a few hours. There is a lot to do and we need all the help we can get - or invent.

Be careful what you ask for, Anthony... :)